
Outside contributors’ opinions and analysis of the most important issues in politics, science, and culture.

What do we expect of content moderation? And what do we expect of platforms?

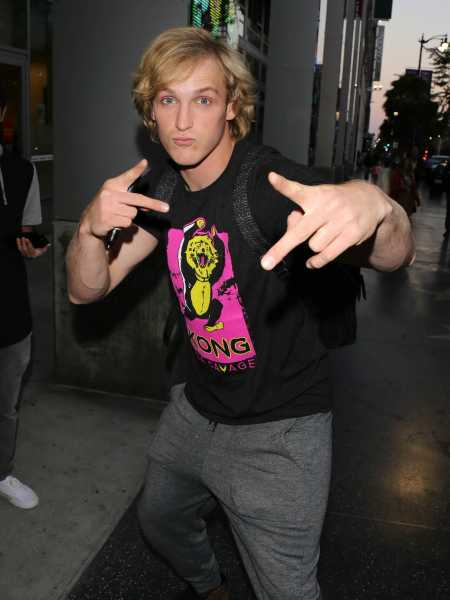

Last week, Logan Paul, a 22-year-old YouTube star with 15 million-plus subscribers, posted a controversial video that brought these questions into focus. Paul’s videos, a relentless barrage of boasts, pranks, and stunts, have garnered him legions of adoring fans. But he faced public backlash after posting a video in which he and his buddies ventured into the Aokigahara Forest of Japan, sometimes called the “suicide forest,” only to find the body of a young man who appeared to have recently hanged himself.

Rather than turning off the camera, Paul continued his antics, pinballing between awe and irreverence, showing the body up close and then turning the attention back to his own reaction. The video lingered on the body, including close-ups of his swollen hand. Paul’s reactions were self-centered and cruel.

After a blistering wave of criticism in the comment threads and on Twitter, Paul removed the video and issued a written apology, which was itself criticized for not striking the right tone. A somewhat more heartfelt video apology followed. He later announced he would be taking a break from YouTube. YouTube went on to remove Paul from its top-tier monetization system, and announced yesterday that Paul would face “further consequences.”

The controversy surrounding Paul and his video highlights the undeniable need, now more than ever, to reconsider the public responsibilities of social media platforms. For too long, platforms have enjoyed generous legal protections and an equally generous cultural allowance: to be “mere conduits” not liable for what users post to them.

In the shadow of this protection, they have constructed baroque moderation mechanisms: flagging, review teams, crowd workers, automatic detection tools, age barriers, suspensions, verification status, external consultants, blocking tools. They all engage in content moderation but are not obligated to; they do it largely out of sight of public scrutiny and are held to no official standards as to how they do so. This needs to change, and it is beginning to.

But in this crucial moment, one that affords such a clear opportunity to fundamentally reimagine how platforms work and what we can expect of them, we might want to get our stories straight about what those expectations should be.

Content moderation, and different kinds of responsibility

YouTube weathered a series of controversies last year, many of which were about children, both their exploitation and their vulnerability as audiences. There was the controversy about popular vlogger PewDiePie, condemned for including anti-Semitic humor and Nazi imagery in his videos. Then there were the videos that slipped past the stricter standards YouTube has for its Kids app: amateur versions of cartoons featuring well-known characters with weirdly unsettling narrative third acts.

That was quickly followed by the revelation of entire YouTube channels full of videos of children being mistreated, frightened, and exploited — for instance, young children in revealing clothing restrained with ropes or tape — that seem designed to skirt YouTube’s rules against violence and child exploitation.

Just days later, BuzzFeed also reported that YouTube’s autocomplete feature displayed results that seemed to point to child sexual exploitation. (If you typed “how to have,” you’d get “how to have s*x with your kids” in autofill.) Earlier in the year, videos were excluded from the more restrictive Kids mode that arguably should not have been, including videos about LGBTQ and transgender issues.

YouTube representatives have apologized for all of these missteps and promised to increase the number of moderators reviewing their videos, aggressively pursue better artificial intelligence solutions, and remove advertising from some of the questionable channels.

Platforms like YouTube assert a set of normative standards — guidelines by which users are expected to comport themselves. It is difficult to convince every user to honor these standards, in part because the platforms have spent years simultaneously promising users an open and unfettered playing field, inviting them to do or say whatever they want.

And it is difficult to enforce these standards, in part because the platforms have few of the traditional mechanisms of governance: They can’t fire content creators as if they were salaried producers. The platforms only have the terms of service and the right to delete content and suspend users. On top of that, economic incentives encourage platforms to be more permissive than they claim to be, and to treat high-value producers differently from the rest.

Incidents like the exploitative videos of children, or the misleading amateur cartoons, take advantage of this system. They live amid this enormous range of videos, some subset of which YouTube must remove. Some come from users who don’t know or care about the rules, or find what they’re making perfectly acceptable. Others are deliberately designed to slip past moderators, either by going unnoticed or by walking right up to, but not across, the community guidelines. These videos sometimes require hard decisions to be made about the right to speak and the norms of the community.

Logan Paul’s video or PewDiePie’s racist outbursts are of a different sort. As was clear in the news coverage and the public outrage, critics were troubled by Paul’s failure to consider his responsibility to his audience, to show more dignity as a video maker, and to choose sensitivity over sensationalism.

The fact that he has 15 million subscribers, a lot of them young, led many to say that he (and, by implication, YouTube) has an even greater responsibility than other YouTubers. These sound more like traditional media concerns, focusing on the effects on audiences, the responsibilities of producers, and the liability of providers. This could just as easily be a discussion about Ashton Kutcher and an episode of Punk’d. What would Kutcher’s, his production team’s, and MTV’s responsibility be if he had similarly crossed the line with one of his pranks?

But MTV was in a structurally different position than YouTube. We expect MTV to be accountable for a number of reasons: It had the opportunity to review the episode before broadcasting it, it employed Kutcher and his team, and it chose to hand him the megaphone in the first place. While YouTube also affords Paul a way to reach millions, and he and YouTube share advertising revenue, these offers are in principle made to all YouTube users.

YouTube is a distribution platform, not a distribution bottleneck — or it is a bottleneck of a very different type. This does not mean we cannot or should not hold YouTube accountable. We could decide as a society that we want YouTube to meet exactly the same responsibilities as MTV, or more. But we must take into account that these structural differences change not only what YouTube can do but also the rationale for enforcing such standards.

Is content moderation the right mechanism to manage this responsibility?

Some argued that YouTube should have removed the video before Paul did. As best we can tell, YouTube reviewers, on first glance, did not. (It seems the video was reviewed and was not removed; we know this only from evidence on Twitter. If you want to see the true range of disagreement about what YouTube should have done, just read down the lengthy thread of comments that followed this tweet.)

Paul did receive a “strike” on his account, a kind of warning. And while many users flagged the video as objectionable, many, many others watched it, enough for it to trend. In its PR response to the incident, a YouTube representative said it should have taken the video down for being “shocking, sensational or disrespectful.”

But it is not self-evident that Paul’s video violates YouTube’s policies. The platform’s rule against graphic imagery presented in a sensational manner, like all of its rules, is purposefully broad. We can argue that the rule’s breadth is what lets videos like this slip by, or that YouTube is rewarded financially by letting them slide, or that vague rules make the work of moderators harder. But I don’t think we can say that, on its face, the video simply did violate the rule.

And there’s no simple answer as to where such lines should be drawn. Every bright line YouTube might draw will be plagued with “what abouts.” Is it that corpses should not be shown in a video? What about news footage from a battlefield — even footage filmed by amateurs? What about public funerals? Should the prohibition be specific to suicide victims, out of respect?

Judging from critics’ comments, it was Paul’s blithe, self-absorbed commentary, the tenor he took about the suicide victim he found, as much as showing the body itself, that was so troubling. Showing the body, lingering on its details, was part of Paul’s casual indifference, but so were his thoughtless jokes and exaggerated reactions.

It would be reasonable to argue that YouTube should allow a tasteful documentary about the Aokigahara Forest, concerned about the high rates of suicide among Japanese men. Such a video might even, for educational or provocative reasons, include images of the bodies of suicide victims, or evidence of their deaths. In fact, YouTube already has some videos of this sort, of varying quality.

If so, then what critics may be implying is that YouTube should be responsible for distinguishing the insensitive versions from the sensitive ones. Again, this sounds more like the kinds of expectations we had for television networks, which is fine if that’s what we want, but we should admit that this would be asking much more from YouTube than we might think.

Is it so certain that YouTube should have removed this video on our behalf? I do not mean to imply that the answer is no, or that it is yes. I’m only noting that this is not an easy case to adjudicate. Another way to put it is, what else would YouTube have to remove, as sensational or insensitive or repellent or in poor taste, for Paul’s video to have counted as a clear and uncontroversial violation?

As a society, we’ve struggled with this very question since long before social media. Should the news show the coffins of US soldiers as they’re returned from war? Should news programs show the grisly details of crime scenes? When is a video that would typically be too graphic acceptable because it is newsworthy, educational, or historically relevant? Not only is the answer far from clear, but it differs across cultures and periods. Whether this video should be shown is disputed, and as a society, we need to have the dispute; it cannot be answered for us by YouTube alone.

How exactly YouTube is complicit in the choices of its stars

This is not to suggest that platforms bear no responsibility for the content they help circulate. Far from it. YouTube is implicated: It affords Paul the opportunity to broadcast his tasteless video, helps him gather millions of viewers who will have it instantly delivered to their feeds, designs and tunes the recommendation algorithms that amplify its circulation, and profits enormously from the advertising revenue it accrues.

Some critics are doing the important work of putting platforms under scrutiny to better understand the way producers and platforms are intertwined. But it is awfully tempting to draw too simple a line between the phenomenon and the provider, to paint platforms with too broad a brush. The press loves villains, and YouTube is one right now.

But we err when we draw these lines of complicity too cleanly. Yes, YouTube benefits financially from Logan Paul’s success. That by itself does not prove complicity; it needs to be a feature of our discussion about complicity. We might want revenue sharing to come with greater obligations on the part of the platform. Or we might want platforms to be shielded from liability or obligation no matter what the financial arrangement. We might also want equal obligations whether there is revenue shared or not. Or we might want obligations to attend to popularity rather than revenue. These are all possible structures of accountability.

It is easy to say that YouTube drives vloggers like Paul to be more and more outrageous. If video makers are rewarded based on the number of views, whether that reward is financial or just reputational, it stands to reason that some video makers will look for ways to increase those numbers.

But it is not clear that metrics of popularity necessarily or only lead to creators becoming more and more outrageous, and there’s nothing about this tactic that is unique to social media. Media scholars have long noted that being outrageous is one tactic producers use to cut through the clutter and grab viewers, whether it’s blaring newspaper headlines, trashy daytime talk shows, or sexualized pop star performances. That is hardly unique to YouTube.

And YouTube video makers are pursuing a number of strategies to seek popularity and the rewards therein, outrageousness being just one. Many more seem to depend on repetition, building a sense of community or following, interacting with individual subscribers, and the attempt to be first. While overcaffeinated pranksters like Logan Paul might try to one-up themselves and their fellow bloggers, that is not the primary tactic for unboxing vidders or Minecraft world builders or fashion advisers or lip syncers or television recappers or music remixers.

Others, like Vox’s Aja Romano, see Paul as part of a “toxic YouTube prank culture” that migrated from Vine, which is another way to frame YouTube’s responsibility. But a genre may develop, and a provider profiting from it may look the other way or even encourage it. That does not answer the question of what responsibility they have for it; it only opens it.

To draw too straight a line between YouTube’s financial arrangements and Logan Paul’s increasingly outrageous shenanigans misunderstands both the economic pressures of media and the complexity of popular culture. It ignores the lessons of media sociology, which makes clear that the relationship between the pressures imposed by industry and the creative choices of producers is complex and dynamic. And it does not prove that content moderation is the right way to address this complicity.

The right kind of reckoning

Paul’s video was in poor, poor taste. And I find this entire genre of boffo, entitled, show-off masculinity morally problematic and just plain tiresome. And while it may sound like I am defending YouTube, I am definitely not. Along with the other major social media platforms, YouTube has a greater responsibility for the content it circulates than it has thus far acknowledged; it has built a content moderation mechanism that is too reactive, too permissive, and too opaque, and it is due for a public reckoning.

Content moderation should be more transparent, and platforms should be more accountable, not only for what traverses their systems but also for the ways in which they are complicit in its production, circulation, and impact. Even so, content moderation is too much modeled on a customer service framework: We provide the complaints, and the platform responds. This may be good for some kinds of problems, but it’s proving insufficient when the disputes are moral, cultural, contested, and fluid.

The responsibility of platforms may not be to merely moderate “better”; they may need to facilitate the collective and collaborative deliberation that moderation really requires. Platforms could make spaces for that deliberation to happen, provide data on complaints so we know where our own concerns stand amid everyone else’s, and pair their decisions with explanations that can themselves be debated.

In the past few years, the workings of content moderation and its fundamental limitations have come to light, and this is good news. But it also seems we are too eager to blame all things on content moderation, and to expect platforms to display a perfectly honed moral outlook every time we are troubled by something we find there. Acknowledging that YouTube is not a mere conduit does not imply that it is exclusively responsible for everything available there. After a decade of embracing social media platforms as key venues for entertainment, news, and public exchange, we are still struggling to figure out exactly what we expect of them.

This essay is adapted from a post at Culture Digitally.

Tarleton Gillespie is a principal researcher at Microsoft Research New England, and an affiliated associate professor in the Department of Communication at Cornell University. His book, Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions that Shape Social Media, will be published in May 2018 by Yale University Press. Many thanks to Dylan Mulvin for assistance in the writing of this article. Find Gillespie on Twitter @TarletonG.

The Big Idea is Vox’s home for smart discussion of the most important issues and ideas in politics, science, and culture — typically by outside contributors. If you have an idea for a piece, pitch us at [email protected].

Source: vox.com